The Viable Validation Stack

Teams that combine synthetic feedback with real customer insight can eliminate weak ideas earlier, iterate with greater confidence, and focus human research where it matters most.

Industry Trends

AI has fundamentally changed how products are built.

Small teams can now generate ideas, create prototypes, and launch new features faster than ever before. What once required months of engineering effort can now happen in days.

But something important hasn’t changed.

The way most teams learn whether those ideas are good.

Recruit users.

Schedule interviews.

Run usability tests.

Synthesize insights.

All of these are valuable. But they were designed for a slower world.

Today we live in a strange moment in product development: teams can build faster than they can learn.

And when that happens, speed becomes dangerous. You don't necessarily build better products. You simply ship the wrong things faster.

To keep up with modern product velocity, the way we gather feedback has to evolve.

Most teams rely on a single source of product feedback: real users.

That makes sense. Talking to customers has always been the gold standard of product discovery. Real people reveal emotional reactions, hidden motivations, and unexpected insights that internal teams simply can’t see on their own.

But real user research also has limitations.

It takes time to recruit participants.

Scheduling interviews slows down iteration.

Small sample sizes introduce a lot of variance.

And perhaps most importantly, many teams use real users for the wrong job.

They use them to eliminate weak ideas.

That’s backwards.

Real users are incredibly valuable for refining strong ideas. But they are an inefficient way to filter early-stage concepts that could have been screened out long before customers ever saw them.

In a world where product development is accelerating, we need a feedback model that works at the same speed.

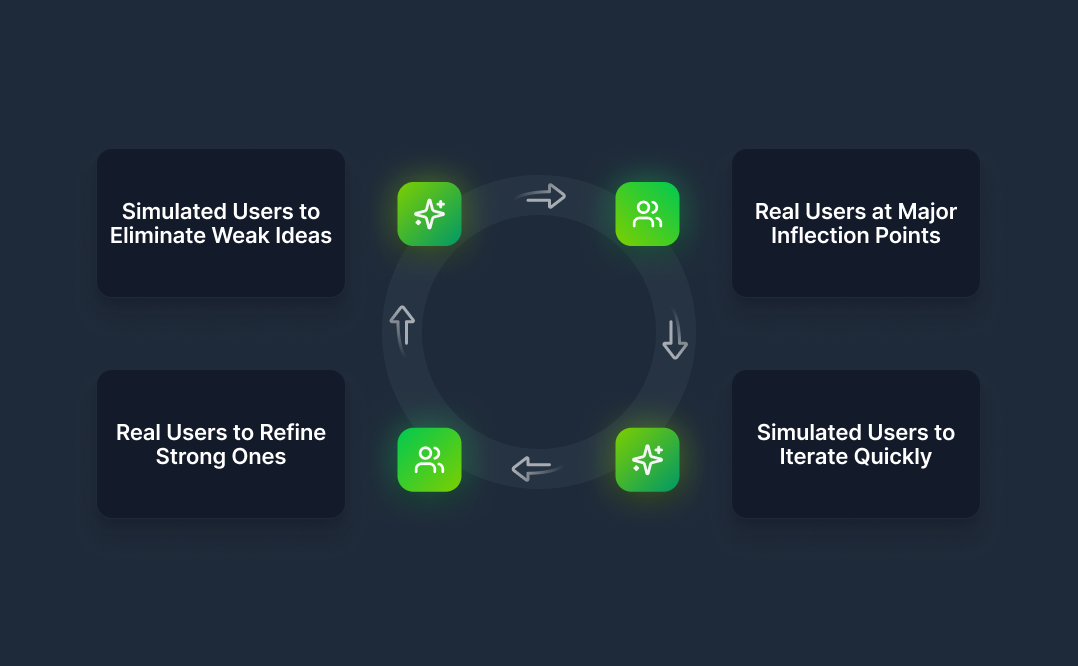

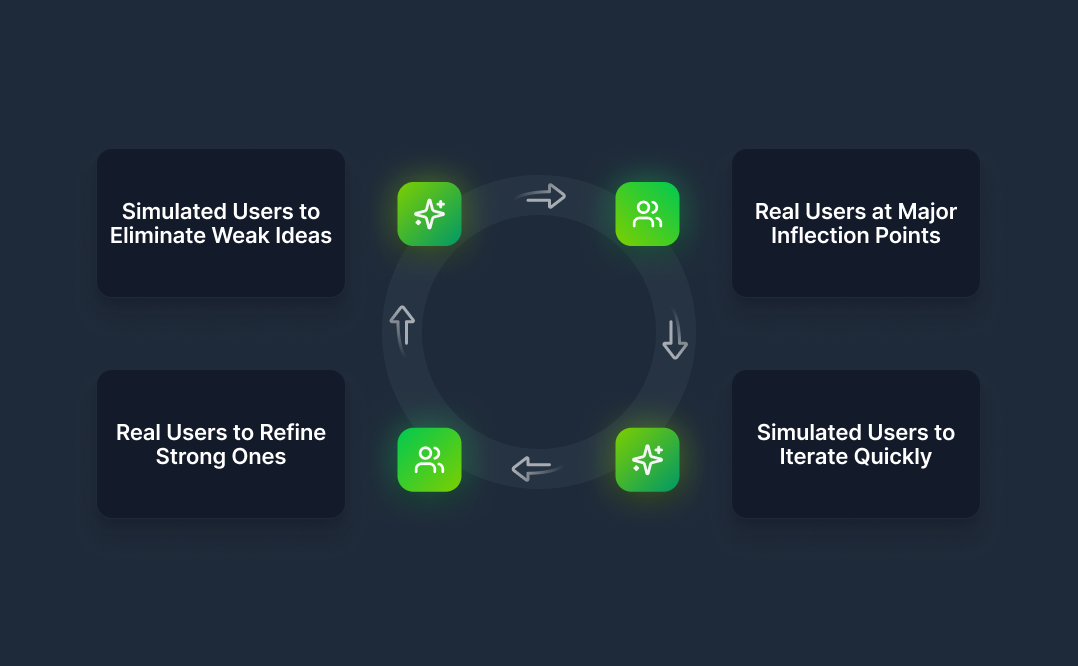

At Viable, we think about product feedback as a system rather than a single activity.

Different stages of product development require different kinds of insight. And when you layer those insights together, you create something far more powerful than any single research method.

We call this the Viable Research Stack.

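It has four layers, alternating between simulation and real people:

Synthetic users narrow early ideas down to the strongest directions.

Real users validate the direction that emerges.

Synthetic users support fast iteration once the product is in the market.

Real users return for high-stakes decisions.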

This isn’t about replacing real user research. It’s about using the right tool at the right moment in the product lifecycle.

Early in a product cycle, teams are rarely evaluating a single idea in isolation. More often, they are choosing among several directions.

Different positioning angles.

Different value propositions.

Different feature priorities.

At this stage, the goal isn’t deep emotional insight. The goal is simply to determine which ideas are worth exploring further.

Synthetic users are extremely useful here because they simulate broad patterns of human behavior. They can help teams evaluate how different persona types might interpret messaging, navigate information hierarchies, or respond to product positioning.

This makes them particularly effective for tasks like comparing homepage messaging, identifying contradictions in product claims, or evaluating whether a value proposition is actually clear.

Synthetic users aren’t trying to perfectly predict what a specific individual will do.

They provide something more practical: directional signal.

In many cases that’s enough to narrow five possible ideas down to two strong ones before bringing real customers into the conversation.

Once a promising direction emerges, the role of real users becomes critical.

This is where teams move beyond structural clarity and begin exploring deeper questions. How does the product make people feel? What concerns might prevent adoption? What unexpected interpretations are emerging?

Real users reveal emotional nuance and lived experience in a way that synthetic systems cannot.

A pricing model might appear perfectly logical when evaluated structurally, but real customers might interpret it as risky or confusing. A value proposition might look differentiated on paper, yet fail to resonate emotionally with the audience it targets.

Synthetic feedback helps determine whether something makes sense.

Real users reveal whether it actually matters.

Once a product is in the market, iteration becomes constant.

Homepage copy evolves.

Onboarding flows change.

Features get repositioned.

Running traditional research cycles for every change isn’t realistic. Teams need faster feedback loops.

This is where synthetic users become valuable again. They allow teams to quickly evaluate structural questions such as whether a new headline communicates the value proposition more clearly, whether an onboarding flow introduces friction, or whether the product still appears differentiated compared to competitors.

Instead of waiting weeks for feedback, teams can identify potential issues early and iterate quickly.

In an environment where AI has accelerated the speed of building, synthetic users help accelerate the speed of learning.

There are also moments when simulation simply isn’t enough.

Major product decisions carry real financial and strategic consequences. These are the points where real customer insight becomes essential.

Examples include launching large paid acquisition campaigns, entering a new market, changing pricing structures, or repositioning the product for a different audience.

These decisions involve emotional reactions, organizational dynamics, and real-world constraints that only actual customers can reveal.

At these inflection points, real user feedback provides the confidence needed to move forward.

The idea of synthetic users often raises skepticism, which is understandable.

But synthetic users are not meant to perfectly replicate a specific human being. Instead, they encode broad behavioral patterns that appear consistently in digital product interactions.

Across millions of user experiences we see recurring signals. Ambiguity causes confusion. Cognitive overload increases drop-off. Clear hierarchy improves comprehension. Differentiation captures attention.

Synthetic models simulate how different persona types interpret messaging and product flows based on these patterns.

They don’t provide perfect predictions.

They provide structured insight that helps teams eliminate weak ideas and identify likely friction points before investing significant resources.

A useful analogy is a wind tunnel. A wind tunnel doesn’t perfectly replicate real flight conditions, but it can reveal structural instability long before a plane leaves the ground.

Synthetic users serve a similar role in product development.

The real advantage of the research stack is not simply speed. It’s learning velocity.

Teams that combine synthetic feedback with real customer insight can eliminate weak ideas earlier, iterate with greater confidence, and focus human research where it matters most.

In a world where AI accelerates building, the teams that win will be the ones that accelerate learning.

Synthetic users don’t replace real users.

They simply make real user research more effective.

Because solving the right problem is always better than solving the wrong problem right.